As a machine learning engineer, choosing the right algorithm for a classification or regression problem matters a great deal, and with so many algorithms available it is rarely obvious which one to pick.

K-fold cross-validation, grid search, randomized search, and other tuning methods help with this choice. Even so, getting the best accuracy out of a model is tedious and requires a lot of hyperparameter tuning. The common machine learning models, such as decision trees, random forests, linear regression, logistic regression, SVMs, ridge, and lasso, each have some drawback when it comes to accuracy. With these algorithms, string columns usually also have to be converted to numeric values by hand, which takes time and effort.

What if much of the tuning and the column conversion were handled automatically, and we also got a graph showing the evaluation metrics of our model?

Yes, it is possible with the help of a powerful machine learning library called CatBoost. The name is short for Categorical Boosting. It helps data scientists and ML/DL engineers improve their performance metrics and automates the handling of categorical columns. The library was developed by Yandex and is available for both Python and R.

Installing the library is simple: it can be done with a normal pip install in Python and through the devtools package in R. Let's take a look inside this library and learn how to use it from Python.

Installation

This article uses Python, so the installation steps for CatBoost in Python are given below:

    pip install catboost

After the installation is done, you can import the library in any Python script or notebook:

    from catboost import CatBoostRegressor   # for regression
    from catboost import CatBoostClassifier  # for classification

Principle Behind

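The principle behind this library is gradient boosting, specifically gradient boosting on decision trees. The model is an ensemble that is built one tree at a time. At each iteration a new tree is fitted to the negative gradient of the loss with respect to the current predictions, and its output, scaled by the learning rate, is added to the ensemble. There is no backpropagation here and there are no weights and biases as in a neural network. The loss is driven down step by step, in a way analogous to gradient descent, but reaching a global minimum is not guaranteed.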
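To make the idea concrete, here is a minimal sketch of gradient boosting for squared-error regression, written in plain Python. It is not CatBoost's implementation: the one-split "stump" learner, the function names, and the toy data are all assumptions chosen for illustration.

```python
def fit_stump(xs, residuals):
    """Fit a one-split regression tree (a stump) to the residuals."""
    best = None
    # Try every distinct value except the largest as a threshold,
    # so both sides of the split are always non-empty.
    for threshold in sorted(set(xs))[:-1]:
        left = [r for x, r in zip(xs, residuals) if x <= threshold]
        right = [r for x, r in zip(xs, residuals) if x > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        error = (sum((r - left_mean) ** 2 for r in left)
                 + sum((r - right_mean) ** 2 for r in right))
        if best is None or error < best[0]:
            best = (error, threshold, left_mean, right_mean)
    _, threshold, left_mean, right_mean = best
    return lambda x: left_mean if x <= threshold else right_mean


def gradient_boost(xs, ys, n_trees, learning_rate=0.1):
    """Build an additive ensemble of stumps for squared-error loss."""
    base = sum(ys) / len(ys)          # initial constant prediction
    trees = []
    preds = [base] * len(ys)
    for _ in range(n_trees):
        # For the loss 0.5 * (y - p)^2 the negative gradient is the residual y - p.
        residuals = [y - p for y, p in zip(ys, preds)]
        tree = fit_stump(xs, residuals)
        trees.append(tree)
        # Each tree's output is shrunk by the learning rate before being added.
        preds = [p + learning_rate * tree(x) for p, x in zip(preds, xs)]

    def predict(x):
        return base + learning_rate * sum(tree(x) for tree in trees)

    return predict


def mse(model, xs, ys):
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(ys)


# Toy data, made up for illustration.
xs = [1, 2, 3, 4, 5, 6, 7, 8]
ys = [1.2, 1.9, 3.1, 3.9, 5.2, 6.1, 6.8, 8.1]

mean_y = sum(ys) / len(ys)
baseline_mse = sum((y - mean_y) ** 2 for y in ys) / len(ys)
mse_after_1 = mse(gradient_boost(xs, ys, n_trees=1), xs, ys)
mse_after_50 = mse(gradient_boost(xs, ys, n_trees=50), xs, ys)

print("constant model:", baseline_mse)
print("1 tree:        ", mse_after_1)
print("50 trees:      ", mse_after_50)
```

The training error shrinks as trees are added, and the learning rate controls how large a step each tree takes.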

Advantages of CatBoost over Other Algorithms

The advantages of CatBoost over other machine learning algorithms are given below:

- Higher performance: Many ML engineers use this library to solve real-world problems and to win competitions held on Kaggle, Analytics Vidhya, DrivenData, etc. Its built-in mechanisms reduce overfitting (they do not eliminate it entirely), and its default settings usually work well, so much less hyperparameter tuning is needed.

- Easily integrates with Python: As mentioned above, installation takes very few steps and the library is available for both Python and R. Importing it is a single line, and ML operations run without noticeable lag in typical workflows.

- Handles categorical data: The library has built-in support for categorical features, so engineers don't need to encode them manually. It is enough to tell the model which columns are categorical, through the cat_features argument.

- Lets you specify hyperparameters of your choice: The library exposes a long list of hyperparameters, and users can set any of them and experiment with the values.

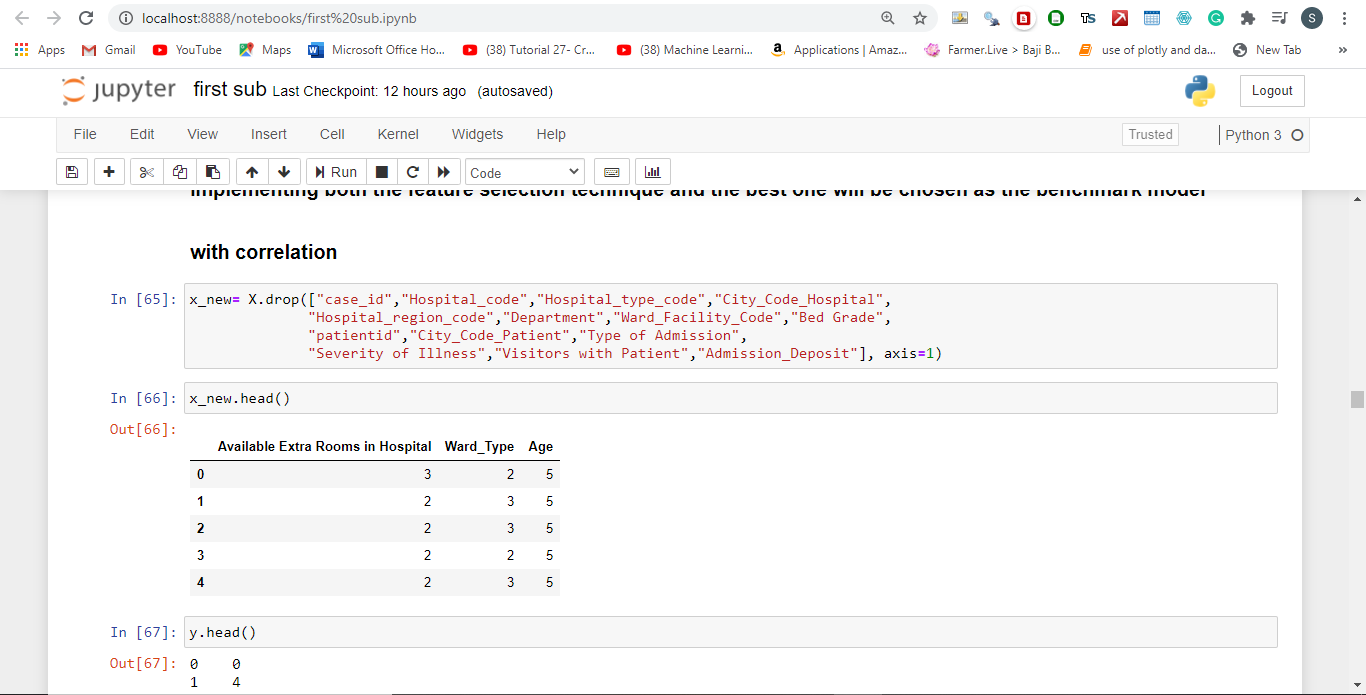

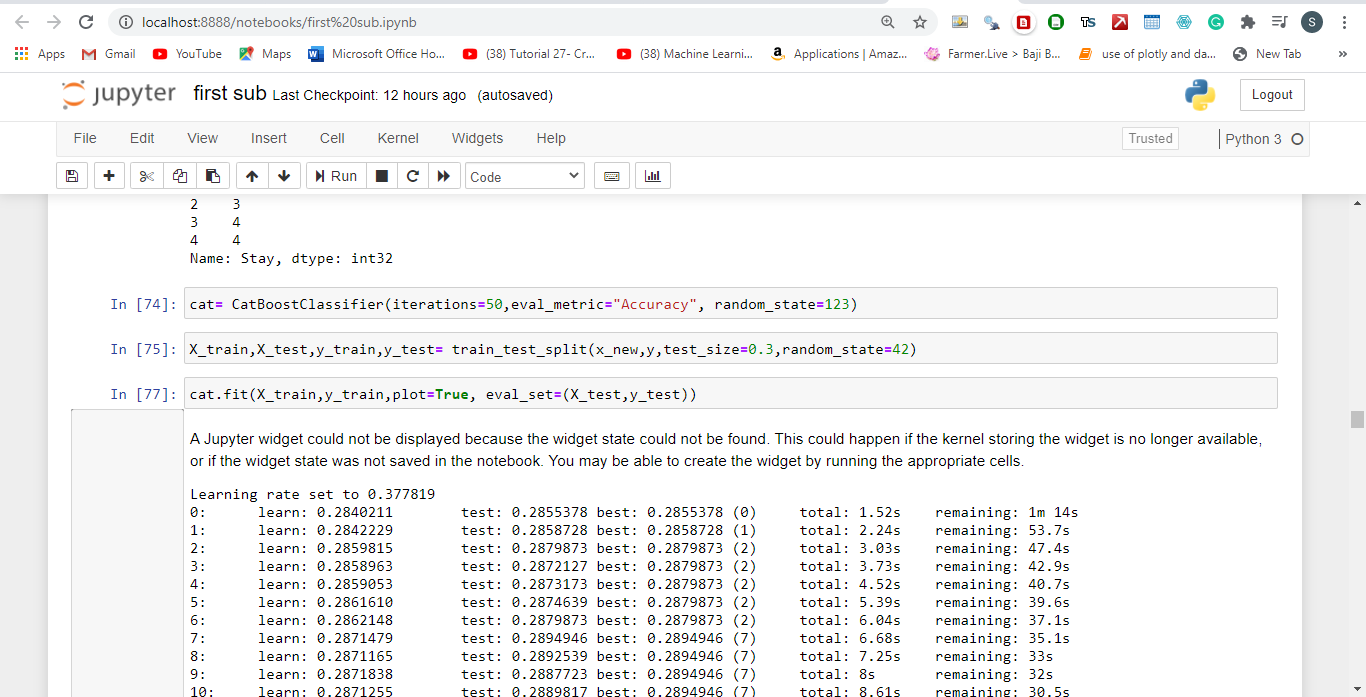

Practical Example
