MySQL is a popular choice for building and working with large databases. People use this relational database management system to build dynamic databases that are well protected and visible only to the parties concerned. There are many tools for creating and managing these databases, including the query editor that comes with MySQL's own tools. But what if we want to bring these databases into our programming language and manipulate them in code? Yes, it is possible with a powerful tool called Pandas.

Pandas is a Python library that can be installed with pip. Once installed, it helps solve many real-world problems, including those that come up when working with relational databases. Pandas does not talk to MySQL on its own; it works together with two other libraries, SQLAlchemy and PyMySQL. SQLAlchemy provides the engine that manages the connection, and PyMySQL is the driver that speaks to the MySQL server. Together they let us run SQL queries and manipulate the results in Python. Any MySQL database, whether hosted in the cloud (AWS, Hostinger, Azure, etc.), on the local machine, or on another system, can be reached with these two libraries. Both are lightweight and make it easy to bring database tables into the Python console.

How to import the data, and some of the things we can do with it afterwards, are described below:

How to import the data?

- To use the features Pandas provides, we first need Pandas installed on our system. Once it is installed, we can verify it by running import pandas as pd in the Jupyter console.

- After Pandas is installed, the next step is to install SQLAlchemy and PyMySQL from the command prompt using pip or conda (with sudo where elevated permissions are required). Once they are installed, we are ready to use them to import our MySQL data.

- To import MySQL data from anywhere, we need the connection details: the username and password, the host where the server runs, and the name of the database. These are passed to the SQLAlchemy engine.

- Once the engine has the connection details, the next step is to load the data into a Pandas data frame. For this we can use Pandas' read_sql function, assign the result to a variable, and run the cell. This imports the data for us in the form of a data frame.

- Once the data is in a data frame, we can use and manipulate it, then save our changes either to a separate file (xlsx, CSV, TSV, etc.) or directly back to the SQL database.
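The steps above can be sketched roughly as follows. This is only an illustration under a few assumptions: it expects pandas, SQLAlchemy 1.4 or newer, and PyMySQL to be installed, the credentials, database name (`shop`), and table name (`orders`) are placeholders, and the helper functions `mysql_url`, `read_query`, and `write_table` are hypothetical names made up for this example. So that it runs without a MySQL server, the demo part uses an in-memory SQLite engine; with a real server you would pass the MySQL URL to `create_engine` instead.

```python
# Assumes: pip install pandas sqlalchemy pymysql
import pandas as pd
from sqlalchemy import create_engine, text
from sqlalchemy.engine import URL


def mysql_url(user, password, host, database, port=3306):
    """Build a SQLAlchemy URL that uses the PyMySQL driver."""
    # URL.create takes the parts separately, so special characters in
    # the password do not have to be escaped by hand.
    return URL.create(
        "mysql+pymysql",
        username=user,
        password=password,
        host=host,
        port=port,
        database=database,
    )


def read_query(engine, query):
    """Run a SQL query and return the result as a DataFrame."""
    with engine.connect() as conn:
        return pd.read_sql(text(query), conn)


def write_table(engine, df, table_name):
    """Save a DataFrame back to the database, replacing the table."""
    with engine.begin() as conn:  # commits when the block exits
        df.to_sql(table_name, conn, if_exists="replace", index=False)


# Placeholder credentials. With a real MySQL server you would write:
#   engine = create_engine(mysql_url("user", "secret", "localhost", "shop"))
url = mysql_url("user", "secret", "localhost", "shop")

# Demo engine: in-memory SQLite, so the example runs without a server.
engine = create_engine("sqlite://")

# Hypothetical sample rows standing in for an existing MySQL table.
orders = pd.DataFrame({"id": [1, 2, 3], "amount": [10.0, 25.5, 7.25]})
write_table(engine, orders, "orders")

# Import the data as a data frame, then export the result as CSV text.
df = read_query(engine, "SELECT * FROM orders WHERE amount > 8 ORDER BY id")
csv_text = df.to_csv(index=False)  # pass a file path to write a file instead
```

Keeping the URL construction in one function means the same read and write helpers work whether the database lives on the local machine or with a cloud provider; only the host and credentials change.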

What can be done with the data?

Once the data is imported into our console, we can build many things with it, such as:

- Dashboards: We can use our SQL data to create interactive dashboards that let users visualize their data, with the help of Python's Dash and Plotly libraries.

- Pivot tables: Once the data is imported, we can create pivot tables to see how one feature depends on another and then draw the necessary inferences.

- Grouping the data: We can group the data into categories based on a feature column to see how much data falls under each distinct value of that column.

- Concatenate: We can concatenate two or more data frames and save the result as a separate data frame, which makes it easier to compare them and see how one relates to the other.

- Feature engineering: After the data is imported, we can preprocess it and then use it to train machine learning algorithms.

Many more things can be done with the SQL data; that is, every function and class the Pandas library offers can be applied to our data to make changes and save them.
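A few of the operations listed above can be sketched in plain Pandas. The `sales` data frame and its column names (`region`, `product`, `amount`) are invented for illustration and simply stand in for a table already loaded from MySQL.

```python
import pandas as pd

# Hypothetical rows standing in for a table loaded from MySQL.
sales = pd.DataFrame(
    {
        "region": ["North", "North", "South", "South"],
        "product": ["pen", "ink", "pen", "ink"],
        "amount": [100, 40, 80, 60],
    }
)

# Pivot table: one row per region, one column per product.
pivot = sales.pivot_table(
    index="region", columns="product", values="amount", aggfunc="sum"
)

# Grouping: total amount per region.
totals = sales.groupby("region")["amount"].sum()

# Concatenate: stack a second batch of rows under the first.
more_sales = pd.DataFrame({"region": ["East"], "product": ["pen"], "amount": [30]})
combined = pd.concat([sales, more_sales], ignore_index=True)

# Feature engineering: one-hot encode a categorical column for ML use.
features = pd.get_dummies(combined, columns=["product"])
```

Each result is itself a data frame or series, so it can be saved to a file or written back to the database in the same way as the imported data.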

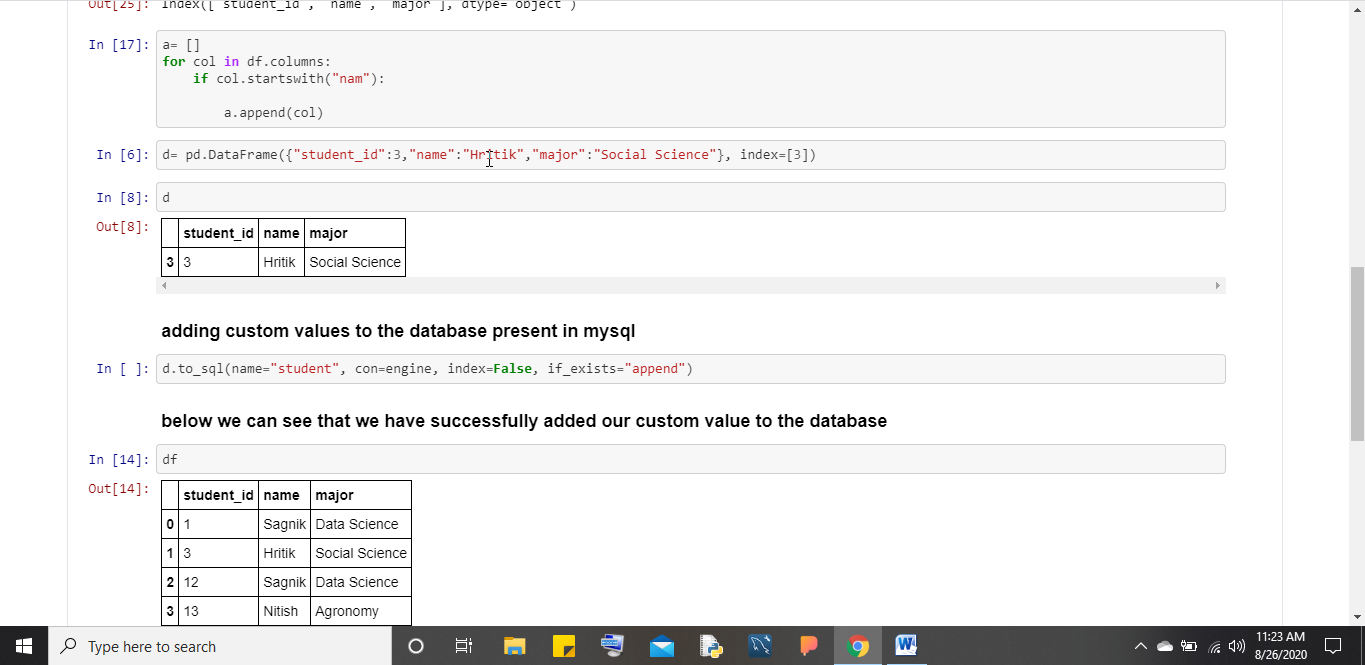

Pictorial representation of how SQLAlchemy works

Conclusion

Use Pandas, together with SQLAlchemy and PyMySQL, to reach your relational databases from anywhere just by knowing the credentials and database name. It is a helpful tool for people who are less familiar with SQL, and also for those who want to use SQL data for visualization or feed it to ML algorithms to get predictions.
