Machine learning is now used by scientists and researchers across many fields, with applications in healthcare, medicine, agriculture, aviation, and more. To practice it, you need a programming language such as Python, R, Java, or C++. Each of these has libraries that implement the common machine learning algorithms so that they can be applied to real-world problems. Python is the most popular choice among machine learning engineers for writing and testing models, largely because its concise syntax saves development time.

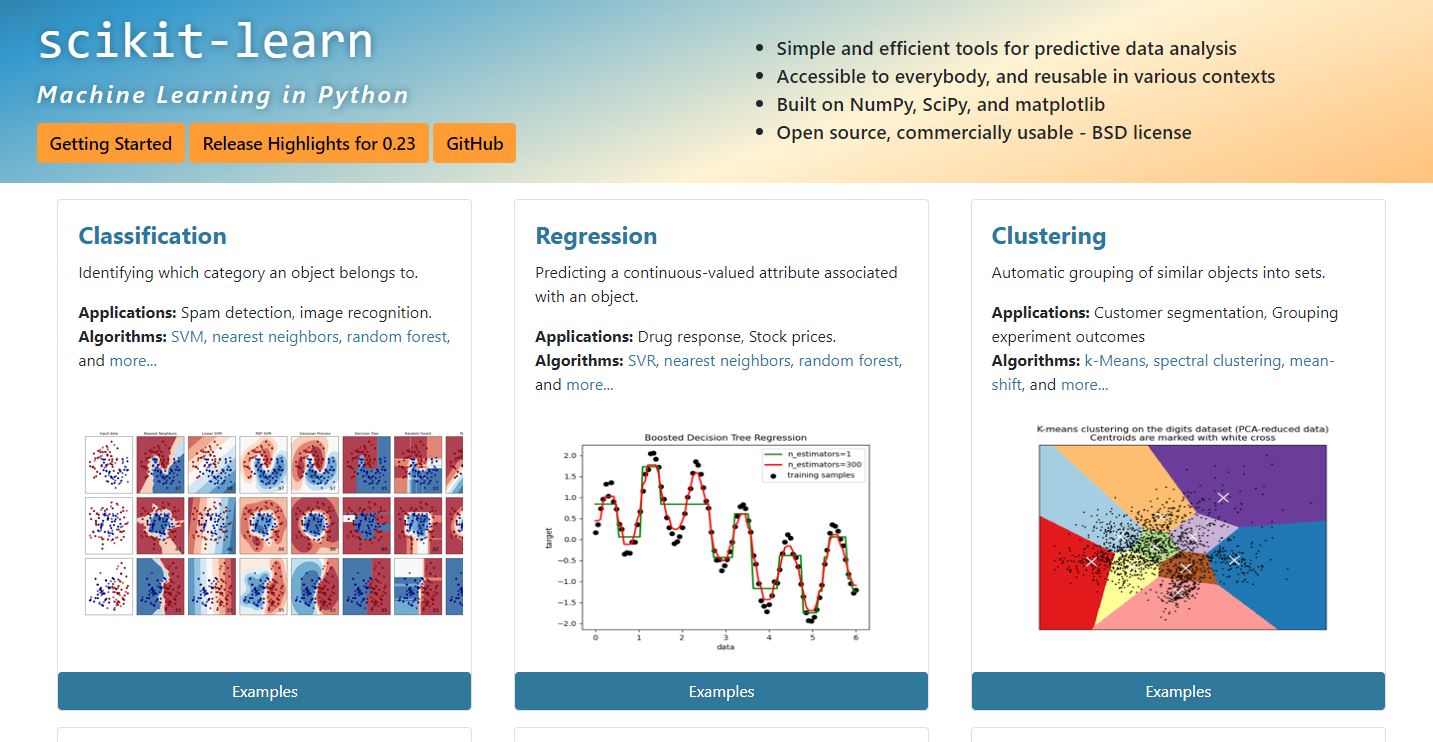

In Python, these algorithms come from libraries that are installed with pip or conda. One of the best-known open-source libraries is scikit-learn, also known as sklearn (the name used to import it).

Scikit-learn is a powerful library and a favorite among machine learning enthusiasts. It is popular with ML engineers because it bundles a wide range of statistical tools for machine learning behind a consistent interface. It covers most of the common regression and classification methods used for predictive and prescriptive analysis, and it supports many other operations as well.
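After installing the package (`pip install scikit-learn` or `conda install scikit-learn`), a quick way to confirm that it is available is to import it and print its version. This is a minimal check and assumes the install has already been run in the active environment; the variable name is only illustrative.

```python
# The package is installed as "scikit-learn" but imported as "sklearn".
import sklearn

# Illustrative variable name; __version__ is the installed release string.
installed_version = sklearn.__version__
print("scikit-learn version:", installed_version)
```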

Some of the operations you can perform with sklearn are listed below:

- Linear Regression: Scikit-learn can fit a linear regression model to a dataset. This technique is used when the value to predict is continuous. It finds the best-fit line, the one that minimizes the squared distance between the line and the observed targets, and uses that line to make predictions.

- Logistic Regression: This technique performs predictive analysis when the target is categorical. It passes a linear combination of the features through an S-shaped (sigmoid) curve to estimate the probability of an outcome, and by default assigns the positive class when that probability exceeds 0.5.

- Feature Engineering: This covers cleaning and preparing the data before modeling. It includes removing outliers, inspecting each feature's distribution (mean, median, mode, skewness), standardizing the data, and so on. Data preparation often takes up most of the work in a project, with the predictive modeling itself being the smaller part.

- Splitting the data: Scikit-learn helps split a dataset into training, validation, and test sets. Evaluating a model on data it did not see during training is how overfitting and underfitting are detected. Without a held-out set, there is no reliable way to tell whether the model will predict new points well.

- Ensemble Learning: Ensemble learning combines many models and is often used when a single regression or classification model does not give good results. The base model is usually a decision tree. In boosting, the trees are often very shallow; a tree with a single split is called a stump. Boosting trains these weak learners one after another, each concentrating on the errors of the previous ones, so that together they form a strong learner. Bagging instead trains trees independently on random samples of the data and combines their predictions, as in Random Forest. Scikit-learn includes AdaBoost, Gradient Boosting, and Random Forest; XGBoost and CatBoost are boosting implementations distributed as separate libraries.

- Support Vector Machine Classification: This classification technique separates the data into categories by drawing a boundary between them (a line in two dimensions, a hyperplane in general), chosen to leave the widest possible margin to the nearest points of each class. A new point is classified according to the side of the boundary it falls on. The idea resembles regression in that a line is fitted and then used for prediction, but here the line separates the classes rather than passing through the data. It is used when the target is categorical, whether ordinal or nominal.

- Support Vector Regression: This technique applies the same idea as Support Vector Classification to regression, predicting a continuous value instead of a class.
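Several of the operations in this list can be tried together in a few lines. The sketch below is only an illustration: the synthetic datasets, the 25% test split, and the variable names are assumptions, not part of any particular project. It splits the data, standardizes the features where the model benefits from it, and scores a handful of classifiers and regressors on the held-out rows.

```python
from sklearn.datasets import make_classification, make_regression
from sklearn.ensemble import AdaBoostClassifier, RandomForestClassifier
from sklearn.linear_model import LinearRegression, LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC, SVR

# Synthetic data, generated purely for illustration (an assumption).
X_clf, y_clf = make_classification(
    n_samples=500, n_features=10, n_informative=5,
    n_clusters_per_class=1, class_sep=2.0, random_state=0,
)
X_reg, y_reg = make_regression(
    n_samples=500, n_features=10, noise=10.0, random_state=0,
)

# Hold out 25% of the rows so each model is scored on unseen data.
Xc_train, Xc_test, yc_train, yc_test = train_test_split(
    X_clf, y_clf, test_size=0.25, random_state=0)
Xr_train, Xr_test, yr_train, yr_test = train_test_split(
    X_reg, y_reg, test_size=0.25, random_state=0)

# Pipelines standardize the features before the scale-sensitive models.
classifiers = {
    "logistic regression": make_pipeline(StandardScaler(), LogisticRegression()),
    "support vector classifier": make_pipeline(StandardScaler(), SVC()),
    "random forest": RandomForestClassifier(n_estimators=100, random_state=0),
    # AdaBoost's default base learner is a depth-1 decision tree (a stump).
    "AdaBoost": AdaBoostClassifier(n_estimators=50, random_state=0),
}
regressors = {
    "linear regression": LinearRegression(),
    "support vector regression": make_pipeline(StandardScaler(), SVR(kernel="linear")),
}

clf_scores = {}
for name, model in classifiers.items():
    model.fit(Xc_train, yc_train)
    clf_scores[name] = model.score(Xc_test, yc_test)  # mean accuracy

reg_scores = {}
for name, model in regressors.items():
    model.fit(Xr_train, yr_train)
    reg_scores[name] = model.score(Xr_test, yr_test)  # R^2

for name, score in {**clf_scores, **reg_scores}.items():
    print(f"{name}: {score:.3f}")
```

Every estimator here is trained with `fit` and evaluated with `score`, which is why swapping one model for another usually takes a single line.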

Scikit-learn also provides many other techniques, such as decision tree regression and classification, K-Nearest Neighbors regression and classification, K-Means clustering (an unsupervised technique), unsupervised nearest-neighbor search, Principal Component Analysis, anomaly detection, and more. Some basic deep learning is possible too, through its multi-layer perceptron models.
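A few of these can be sketched on the iris dataset that ships with scikit-learn. The choice of dataset, two principal components, three clusters, and five neighbors are all assumptions made for this example rather than recommended settings.

```python
from sklearn.cluster import KMeans
from sklearn.datasets import load_iris
from sklearn.decomposition import PCA
from sklearn.model_selection import cross_val_score
from sklearn.neighbors import KNeighborsClassifier
from sklearn.preprocessing import StandardScaler

# Iris is bundled with scikit-learn, so nothing is downloaded.
X, y = load_iris(return_X_y=True)
X_scaled = StandardScaler().fit_transform(X)

# Principal Component Analysis: project the four features onto two components.
pca = PCA(n_components=2)
X_2d = pca.fit_transform(X_scaled)
print("variance explained:", pca.explained_variance_ratio_.sum())

# K-Means: unsupervised, so the labels y are not used here.
kmeans = KMeans(n_clusters=3, n_init=10, random_state=0)
cluster_ids = kmeans.fit_predict(X_scaled)

# K-Nearest Neighbors: supervised, scored with 5-fold cross-validation.
knn = KNeighborsClassifier(n_neighbors=5)
knn_accuracy = cross_val_score(knn, X_scaled, y, cv=5).mean()
print("KNN accuracy:", knn_accuracy)
```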

Scikit learn Machine learning in Python Installation guide

Conclusion

With this library, machine learning engineers find the field more approachable and enjoyable. Install it through pip, try it for yourself, and explore what it has to offer.
